TikTok has officially rolled out a series of updates to its Community Guidelines, introducing significant shifts in how search results, comments, and AI-generated content are moderated across the platform.

Personalized Search and Comment Filtering

TikTok has replaced its earlier language on “search suggestions” with a more dynamic model. Where the older guidelines focused on relevance, the new rules state that both search results and recommendations are tailored to each user. TikTok confirmed that it uses individual data points, including past search history and watch behavior, to personalize the experience.

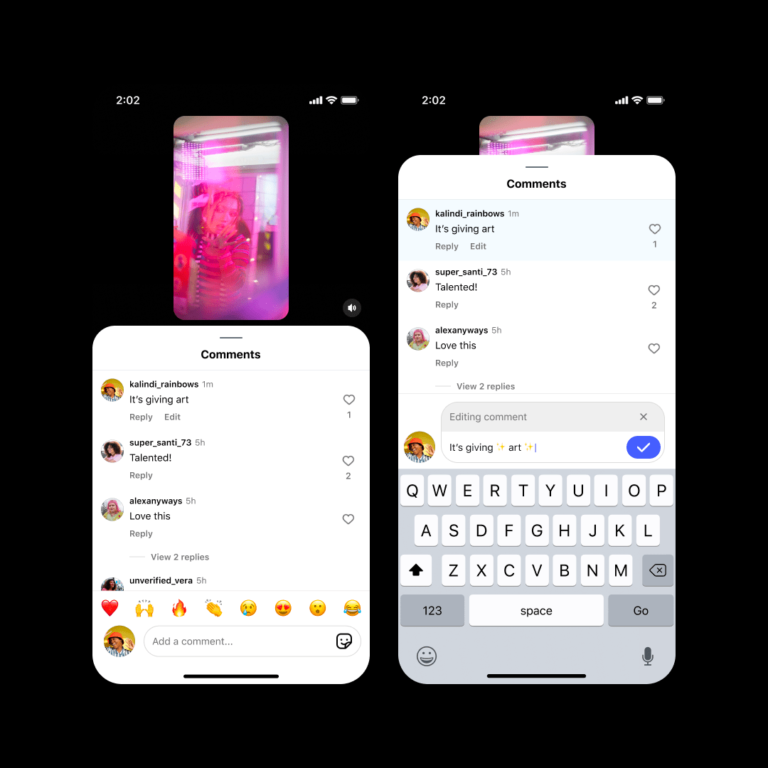

The same logic extends to the comment section. TikTok now explicitly states that comments are sorted using algorithmic signals such as previous replies, user likes, and reporting history, so comment sections will look different from one user to the next.

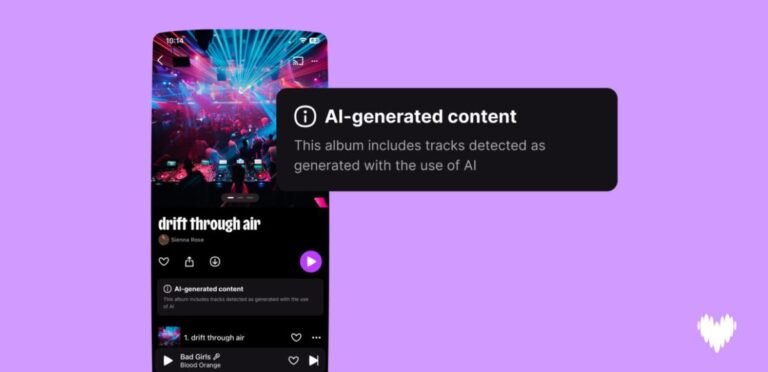

Shifting Stance on AI Content

The wording of the section on AI content has changed notably. Previously, the platform provided a detailed list of prohibited deepfake content, specifically banning material that faked authoritative sources or crisis events, or that depicted unauthorized endorsements by public figures.

This has been replaced by a broader, more concise mandate: TikTok now prohibits content that is “misleading about matters of public importance or harmful to individuals.” Interestingly, the specific mention of AI-generated endorsements has been removed, raising questions about whether the platform intends to integrate more celebrity-approved AI content in the future.

Changes to For You Feed and Moderation Philosophy

TikTok has also streamlined its For You feed (FYF) Eligibility Standards section. The previous, comprehensive list of FYF-ineligible content has been removed, and its details are now dispersed across various sections of the updated guidelines, leaving creators without a centralized reference for what content might be restricted.

Finally, the company has updated the language surrounding its moderation goals. Where the platform previously aimed to remain “safe, trustworthy, and vibrant,” it now describes its mission as maintaining a “safe, fun, and creative place for everyone.” The removal of the term “trustworthy” from this mission statement marks a subtle but distinct shift in the company’s stated priorities for platform integrity.